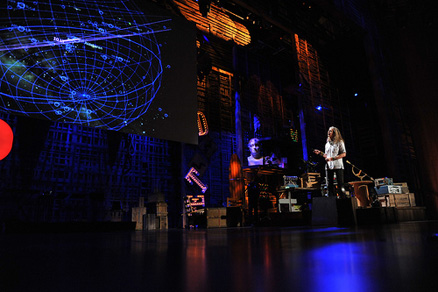

Once more, I heard Benedetta’s voice in my head reminding me that it’s all about the user interaction, and it made me rethink my first idea for a final project. My idea from last week was not strong enough as a user experience, nor did it have interactive storytelling at its core. As I brainstormed and fleshed out new ideas, images of Carter Emmart’s incredible visualization of the universe kept popping back into my mind.

Although I have never formally studied astronomy, even at the college level, it was a subject that blew my mind when I was a kid. I read every book on space in my elementary school library and then moved on to any book about aliens I could get my hands on. The Betty and Barney Hill abduction story from the early 1960s, Roswell, and Area 51 all still excite me. And then it clicked. I want to build an alien encounter!

In my research I came across everything from SETI, the Search for Extraterrestrial Intelligence, to an amazing Atlantic article about Robert Gray and his book on the Wow! signal, a radio signal received in 1977 that has yet to be explained away as simple interference. It has not been proven to be extraterrestrial, but no other explanation has been confirmed either.

THE IDEA:

An organization searching through space for alien life sends out signals with an array of radio telescopes. And an alien answers back.

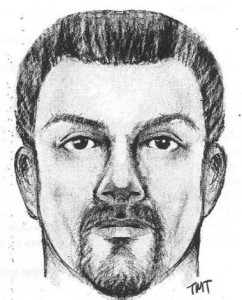

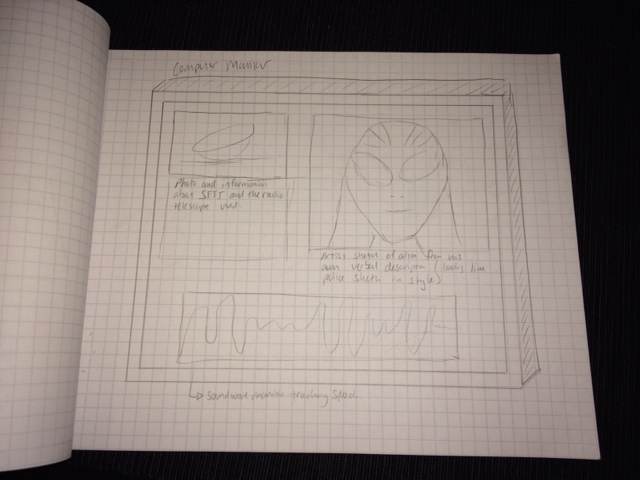

By the time of the user interaction, the alien has been communicating with us for some time. A translation system has already been built so that we can understand each other. The alien has also described himself to an artist, so a sketch of him (in the style of a police composite sketch) is shown on the screen.

Of course, the subject is a far more impressive alien than any mere human. The computer screen shows the image of the radio telescope array, the story behind the mission of the make-believe organization, and the radio wave monitor, giving you context and backstory for the experience. The sound waves jump to life as you speak and as the alien answers; this is the only animated element on screen.
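The jumping sound waves on the radio wave monitor could be drawn from live microphone data. Below is a minimal sketch, assuming the page reads the mic through the Web Audio API's `AnalyserNode`, whose `getByteTimeDomainData` fills a `Uint8Array` with values from 0 to 255, where 128 means silence. The function name `waveformPoints` and the `{ x, y }` point format are hypothetical, and the actual drawing (canvas or SVG) is left out.

```javascript
// Sketch: turn one frame of audio samples into points for the radio wave monitor.
// Samples are assumed to be byte time-domain data (0..255, 128 = silence).
function waveformPoints(samples, width, height) {
  const points = [];
  if (samples.length === 0) return points;
  // Spread the samples evenly across the monitor's width.
  const step = samples.length > 1 ? width / (samples.length - 1) : 0;
  for (let i = 0; i < samples.length; i++) {
    const normalized = (samples[i] - 128) / 128; // roughly -1..1
    const x = i * step;
    const y = height / 2 - normalized * (height / 2); // silence sits on the midline
    points.push({ x, y });
  }
  return points;
}

// Hypothetical browser wiring (not run here):
// const ctx = new AudioContext();
// const stream = await navigator.mediaDevices.getUserMedia({ audio: true });
// const analyser = ctx.createAnalyser();
// ctx.createMediaStreamSource(stream).connect(analyser);
// const samples = new Uint8Array(analyser.fftSize);
// analyser.getByteTimeDomainData(samples); // then waveformPoints(samples, 600, 200)
```

Calling this once per animation frame (for example from `requestAnimationFrame`) and connecting the points would animate the wave; the alien's reply could use the same function if its audio is also routed through an analyser.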

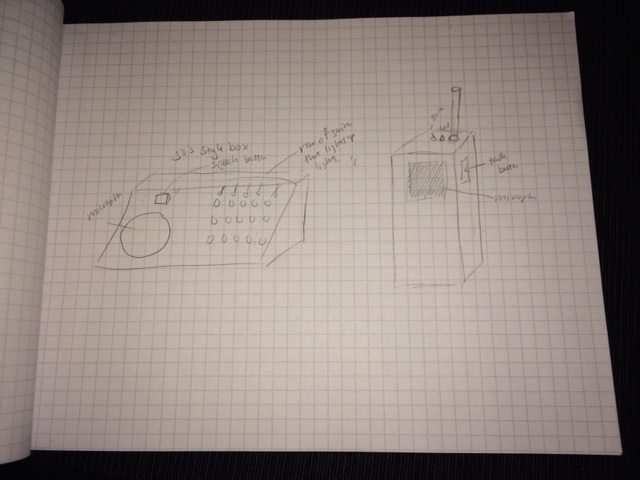

To speak to the alien, you press the button on the walkie-talkie and speak into the mic. The sound waves appear on the screen and are sent into space, and then the alien responds. His words are also shown on the radio wave monitor. He can describe his planet, his home, his everyday life, and much more, but if you ask him something he doesn’t understand, he will let you know that as well.

I am still undecided about what the mic should look like. Should it be a walkie-talkie or a device out of a 1950s sci-fi movie?

Since this encounter is purely entertainment and experience driven, the design should make it easy to use, but it should also add to the world of the narrative. I must confess the alien’s story is not written yet, so some of these decisions will be based on today’s user play testing in class, and others will be made as the story comes to me. BUT my first fear was: how can I get it to work?!!!

SYSTEM GUIDE:

The radio/walkie-talkie will have a Bluetooth microphone inside. There will be an on/off switch and a light to indicate that it is on. To talk, you press a button while you speak. I believe this is fairly self-explanatory, since walkie-talkies and intercoms all require you to press a button while speaking, but it is something I need to check during user testing.
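On the software side, press-to-talk could map directly onto starting and stopping speech recognition. Below is a minimal sketch, assuming the browser's `SpeechRecognition` (`webkitSpeechRecognition` in Chrome) and assuming the physical button reaches the page as a key press; how the button actually gets to the computer is still an open hardware question. `createPushToTalk`, `press`, and `release` are hypothetical names, and the recognizer constructor is passed in so the logic can be tried without a microphone.

```javascript
// Push-to-talk sketch: speech recognition runs only while the button is held.
// `Recognition` is injected: the browser's SpeechRecognition or a test fake.
function createPushToTalk(Recognition, onTranscript) {
  const recognition = new Recognition();
  recognition.lang = "en-US";
  recognition.continuous = true;      // keep listening while the button is down
  recognition.interimResults = false; // only report finished phrases
  let heard = [];
  let talking = false;

  recognition.onresult = (event) => {
    // Collect each finished phrase, starting from the first new result.
    for (let i = event.resultIndex; i < event.results.length; i++) {
      if (event.results[i].isFinal) heard.push(event.results[i][0].transcript.trim());
    }
  };

  recognition.onend = () => {
    // Fires after stop(), or if the browser ends recognition on its own.
    talking = false;
    if (heard.length > 0) onTranscript(heard.join(" "));
    heard = [];
  };

  return {
    press() {
      if (talking) return; // ignore repeated presses while already listening
      talking = true;
      recognition.start();
    },
    release() {
      if (!talking) return;
      recognition.stop(); // any last results arrive, then onend fires
    },
  };
}

// Hypothetical browser wiring, with the space bar standing in for the button:
// const Recognition = window.SpeechRecognition || window.webkitSpeechRecognition;
// const ptt = createPushToTalk(Recognition, (text) => console.log("Heard:", text));
// window.addEventListener("keydown", (e) => { if (e.code === "Space") ptt.press(); });
// window.addEventListener("keyup", (e) => { if (e.code === "Space") ptt.release(); });
```

Browsers repeat `keydown` events while a key is held, which is why `press()` ignores presses while it is already listening.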

Inside the communication device there will also be a soldered breadboard; wiring to the button and LED; an Arduino, if the Bluetooth piece alone cannot communicate with the computer (this may not be necessary); and a battery and battery holder to power it, with the battery easy to reach for replacement. I am not sure whether a wireless mic (for example, a reverse-engineered Bluetooth earbud) will be enough, or whether I need a Bluetooth transmitter and receiver, with the receiver attached to the computer. I will probably start by hard-wiring it to make sure it works before switching to wireless.

In my mind, the hardest part is the code. It borders on the most basic AI technology, which puts it well beyond my coding level! The first step was figuring out how to turn speech into text in JavaScript. One friend mentioned Google’s Siri-like speech API, and another sent me this link, which looks not too difficult (famous last words).

http://shapeshed.com/html5-speech-recognition-api/

It uses the HTML5 speech recognition API to turn speech into text in JavaScript. I need to figure out quite a bit more on the code side of things, but my plan is to search the text for key words that trigger specific pre-recorded mp3 responses. If the user asks a question that has no key words linked to an answer, the alien will respond that he does not understand the question. Basically, I need a fallback response so the experience does not break when I have no answer. I expect the coding on this will be quite difficult, but I will learn a ton, which is a big part of my goal for the project.
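The key-word plan could start as a simple lookup table with a fallback. Below is a minimal sketch, assuming whole-word matching and first-match-wins ordering; the keywords, mp3 file names, and `chooseResponse` are all placeholders until the alien’s story is written.

```javascript
// Placeholder table: each entry maps key words to a pre-recorded answer.
const RESPONSES = [
  { keywords: ["planet", "world"], file: "audio/planet.mp3" },
  { keywords: ["home", "live", "house"], file: "audio/home.mp3" },
  { keywords: ["eat", "food", "day"], file: "audio/daily-life.mp3" },
];
// Placeholder "I don't understand" answer, used when nothing matches.
const FALLBACK = "audio/do-not-understand.mp3";

function chooseResponse(transcript, responses = RESPONSES, fallback = FALLBACK) {
  // Lowercase and split into words so "Planet?" still matches "planet".
  const words = new Set(transcript.toLowerCase().match(/[a-z']+/g) || []);
  for (const response of responses) {
    // The first entry with any matching key word wins.
    if (response.keywords.some((k) => words.has(k))) return response.file;
  }
  return fallback; // always answer, so the experience never breaks
}
```

In the browser, the chosen file could be played with `new Audio(file).play()`. Because the first matching entry wins, more specific topics should go near the top of the table, and the play-testing answers to "What would you want to ask an alien?" are exactly what would fill it in.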

In my research, I am reading about everything from online chatbots (which I have also been trying out) to the God Helmet. I must say the reading for this project is interesting at every turn! But to make this truly work, I have many questions that need answers during our play testing in class.

QUESTIONS:

- Would you automatically know to press a button next to a microphone? Would you know to hold the button down for as long as you are speaking, or is this something I need to explain better?

- What would you want to ask an alien? (This one is extraordinarily important: to make this a good experience, I need to predict what most people will want to ask him so I can write the answers!)

- To make it feel real, is it important to you to see or hear the system actively translating from alien speech, or would you rather it translate automatically and put an effect on the voice à la Darth Vader?

- Do you want the interaction to be comic or sincere? Basically, should the experience feel funny or slightly creepy?

- Do you want to see the alien? My first thought was to build a 3D model of the alien and have him animate slightly as he speaks (this would be very hard). BUT this conversation happens over radio telescopes. Would a video call be realistic at all? That made me think of a police artist sketch. Once we figured out a way to translate from alien to English (which, in the story, has already happened), the alien would have the words to describe himself, so there could be an “artist sketch” of him. Would that make more sense?

BOM (Bill of Materials):

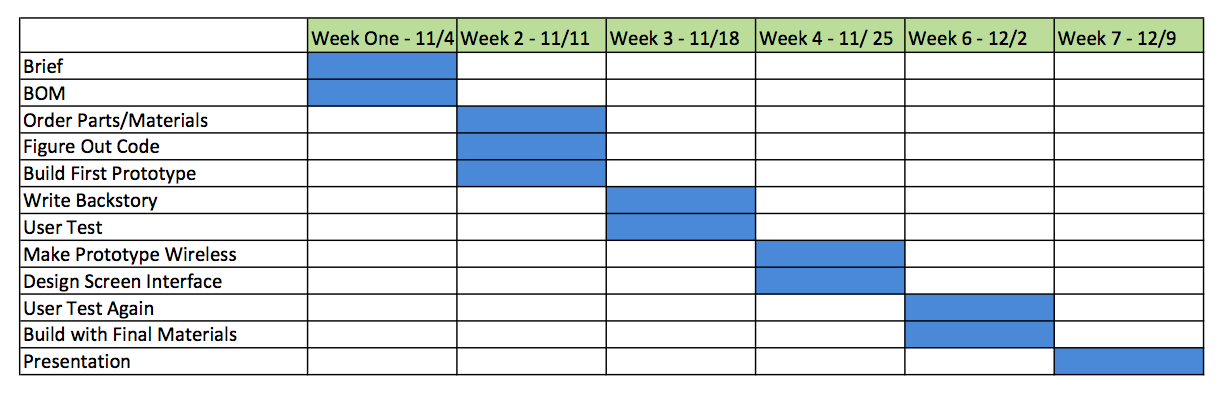

TIMELINE: